The dream collection

This is a space for AI exploration. A collection of tools & tips for creative applications, strategic dreaming and context for the technology.

To understand the why or why not, see my post dreams are contagious – this page focusses only on what & how.

Last update (still very much in progress!): 23.01.2024

DREAM IT

WHOLESOME

INCLUSIVE

JOYFUL

Multi-modality

Google Gemini

Gemini is built from the ground up for multimodality — reasoning seamlessly across text, images, video, audio, and code.

deepmind.google – introduction

bard.google.com – use it for free

Pictures

Midjourney

Creates wild images. Consistency can be a challenge: it behaves like an untamed artist, running off with your prompt and reading something surprising into it, mostly in a good way. Can use a reference image. It is more tedious to use (as a Discord chat bot), but offers some nice parameters for advanced users. Functionality has recently been expanded to replacing regions and zooming out or in. 3D and movie clips are supposedly in the making as well.

midjourney.com – official website

Discord – chat platform needed to access Midjourney

Marigold guide – visual guide to parameters

Style prompts (Pastebin) – for inspiration

OpenAI DALL•E 3

Creates square images. Can also add content around existing images (outpainting) or replace something in them (inpainting). While quality depends on the scene, the results are pretty straightforward. The interface is among the easiest to use.

labs.openai.com

bing.com/images/create – use it for free

StableDiffusion

Creates any kind of image. Can inpaint and outpaint. Can be trained on a set of images (your selfies, your dog, selfies of both you and your dog) to reimagine them in a different scenery or style. Is partly open source and has a vibrant community. Has a lot of fine-tuned models. Has some easy options for setting it up yourself at home, although the quality does not match what a server farm delivers.

beta.dreamstudio.ai – official application

diffusionbee.com – macOS app (very easy)

civitai.com/models – custom models from the community

AUTOMATIC1111 – a popular interface for setting it up yourself (rather nerdy)

Adobe Firefly

In quality, this recent addition to the Adobe Creative Cloud sits somewhere between StableDiffusion and Midjourney. The Generative Fill is a strong tool and well positioned within Photoshop.

firefly.adobe.com – web app

Generative Fill – utility in Photoshop

Krea

Offers real-time generation, upscaling & enhancements, pattern generation and more.

krea.ai – free (limited)

Utilities

Magnific – best photorealistic AI upscaler & enhancer for now

Real-ESRGAN – makes images larger

Prompt examples

Here are a few prompt styles that worked well for me using Midjourney. The community is a great place for continuous exploration of styles, keywords and strategies to optimize prompts. As you can see, anything from icons to art and photorealistic portraits can already be generated today.

While text in images has yet to be mastered and the details are often still a bit wonky – as of 2023, this is addictive to the point that I’m throwing money at these companies. And if the past is any indication, expect things to improve, fast.

cargo bike rental, minimalistic, minimalist company logo, line art, vector, UI UX, web design, logo, icon, SVG, asset --v 4 --q 2

cute cargo bike, boho style illustration, abstract, minimalistic, high res --v 4 --q 2 --ar 3:2

surreal collage, minimalist nature, funky color, broad scenery, surrealistic, --v 4 --q 2 --no car

urban gardening project, chilling out, Berlin, tilt shift --v 4 --q 2 --no car

{URL to stock photo of balkonkraftwerk as reference} the neighbours modern balcony across the street, solar panels attached to the outside wall or parapet, full with green plants and wooden shelves, cloudy bright daylight summer afternoon, cozy urban lifestyle, intricate surface details, shutter speed 1/400, shot on IMAX 70mm, high resolution, fine grain --v 4 --q 2 --no closeup

studio photograph of a friendly lovely kindergarten teacher + smiling kindly + positive good vibes + fine ultra-detailed realistic hair + ultra photorealistic + Hasselblad H6D + high definition + 8k + cinematic + color grading + depth of field + photo-realistic + film lighting + rim lighting + intricate + realism + maximalist detail + very realistic + photography by Carli Davidson, Elke Vogelsang --v 4 --q 2 --ar 3:2
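The prompts above all follow the same shape: a free-text description, followed by parameters introduced with `--` (here `--v` for the model version, `--q` for quality, `--ar` for aspect ratio and `--no` for things to leave out). As a rough sketch of that structure, and not something Midjourney itself provides, a few lines of Python can take a prompt apart. The helper name `split_prompt` is made up for this illustration.

```python
def split_prompt(prompt):
    """Split a prompt into its description and its `--name value` parameters."""
    # Everything before the first " --" is the free-text description.
    description, *chunks = prompt.split(" --")
    params = {}
    for chunk in chunks:
        # Each chunk looks like "ar 3:2": a name, a space, then the value.
        name, _, value = chunk.strip().partition(" ")
        params[name] = value.strip()
    return description.strip(), params


text, params = split_prompt(
    "cute cargo bike, boho style illustration, abstract, minimalistic, "
    "high res --v 4 --q 2 --ar 3:2"
)
print(text)
print(params)
```

Keeping description and parameters apart like this makes it easy to reuse one description with different settings, or one set of settings across many descriptions.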

What are your ideas?

Which applications would you like to see? What would you like to explore? What are your experiences with AI?

Video

Pika

Newest but very capable kid on the block.

RunwayML

RunwayML offers tools to generate videos or animate existing pictures. While flickering & artefacts still point to its artificial origins, movement and decent temporal coherence are already bringing a lot of life to images.

RunwayML Gen-2 – their latest algorithm

Research papers

There is a lot of great research happening which has not yet (or might never) culminated in tools accessible to the public. Here is an example from Google (February 2023):

Text

OpenAI GPT-X

Experimenting with OpenAI's text transformers for the first time felt like magic. We realised the Mother Earth Telephone (2022) with GPT-3. This platform is mostly targeted at the developer community.

platform.openai.com – developer website

OpenAI ChatGPT

Made AI go mainstream. Writes text. Will propose a structure for your presentation on any given topic (even something non-existent), reorder data for you, try to explain anything, rhyme, code or rewrite something into medieval words. Quite fun and surprisingly still free. Here is a collection of prompts & perspectives for inspiration.

chat.openai.com – official website

Anthropic Claude

Text-based assistant.

Open Source models

Llama, Falcon, GPT-NeoX... Following the success of OpenAI's GPT-X, the community has already built its own open-source models that run offline and locally on your own computer.

lmstudio.ai – easy setup

Voice

ElevenLabs

One of the leading & publicly available voice generation platforms.

Here is another example of existing tech:

Music

Coming soon

This section is still a work in progress. Here is a research paper with samples: Google MusicLM.

But how does it work?

Algorithms are trained on astronomical amounts of data. Artificial neurons, loosely similar to neurons in the human brain, learn to distinguish features like objects, lighting, styles or relations between things. For example, they learn everything about a house or a plant, and that plants sometimes happen to be around or in a house. We can then ask the neuron model to imagine a house. Instead of copy-pasting bits and pieces “from memory”, the model will come up with its own remixed version, its best interpretation of a house.

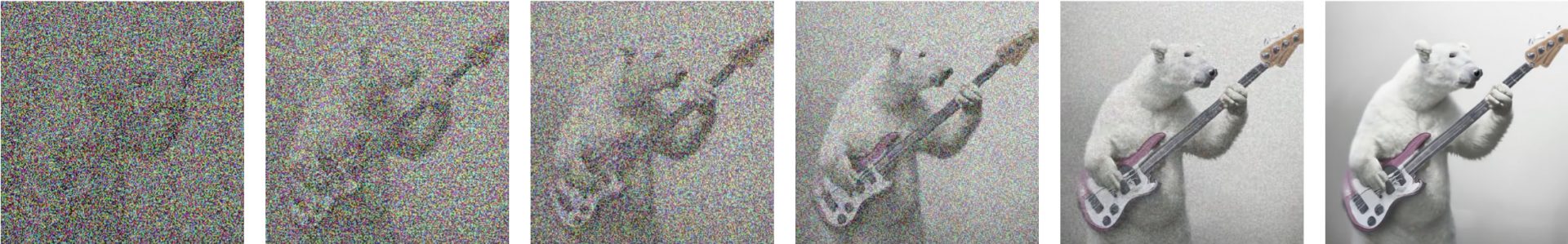

In 2021, diffusion-based algorithms were made accessible to the public. In this process, noise (randomness) is added to training images and neuron models are asked to clean up these images with the guidance of a text description. After a lot of training, these models get so good they can “clean up” images which are pure noise. They are dreaming something into the grey fog like we would see shapes in a cloudy sky.
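The noising step can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how any of the tools on this page are implemented: a list of four numbers stands in for an image, the names `add_noise`, `recover_image` and `alpha_bar` are made up for this sketch, and the trained model is replaced by a perfect guess of the noise so the round trip can be checked. A real model has to learn that guess from training data, guided by the text description.

```python
import math
import random


def add_noise(pixels, alpha_bar, rng):
    """Blend an image with Gaussian noise.

    alpha_bar close to 1.0 keeps most of the image,
    alpha_bar close to 0.0 leaves almost pure noise.
    """
    signal = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [signal * p + noise_scale * n for p, n in zip(pixels, noise)]
    return noisy, noise


def recover_image(noisy, predicted_noise, alpha_bar):
    """Undo add_noise, given a guess of the noise that was mixed in."""
    signal = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [(x - noise_scale * n) / signal for x, n in zip(noisy, predicted_noise)]


rng = random.Random(0)          # fixed seed so the run is repeatable
image = [0.0, 0.5, 1.0, 0.5]    # toy 4-pixel "image"

noisy, noise = add_noise(image, alpha_bar=0.5, rng=rng)
# Stand-in for the trained model: we hand back the exact noise that was added.
restored = recover_image(noisy, noise, alpha_bar=0.5)

print("noisy:   ", [round(x, 3) for x in noisy])
print("restored:", [round(x, 3) for x in restored])
```

With a perfect noise guess the original pixels come back. Training is about getting a model's guess close enough that this clean-up also works on images it has never seen, and eventually on pure noise.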

Here is an example of this process:

Great explanation of LLMs (ChatGPT / GPT-X)

The Financial Times did a great job visualising the mechanics of large language models (LLMs).

Easy explainer for text-to-image

DALL•E 2

Deep dive: How does a neural net work?

Deep dive: How diffusion algorithms work

Stay funky.

Want to keep reading? I'll let you know when there's something new and promise to simply follow my own curiosity. Are you up for a surprise?

Let me know what you think.

Old School

PGP Public Key, Fingerprint: 0x1A3A5140C3C3CEFD

Hagen Plum

Tegeler Straße 24

13353 Berlin, Germany
